EntropyShield

Link to open source: https://github.com/DUKartik/EntropyShield

Link to Live Project: https://entropy-frontend-808108840598.asia-south1.run.app/

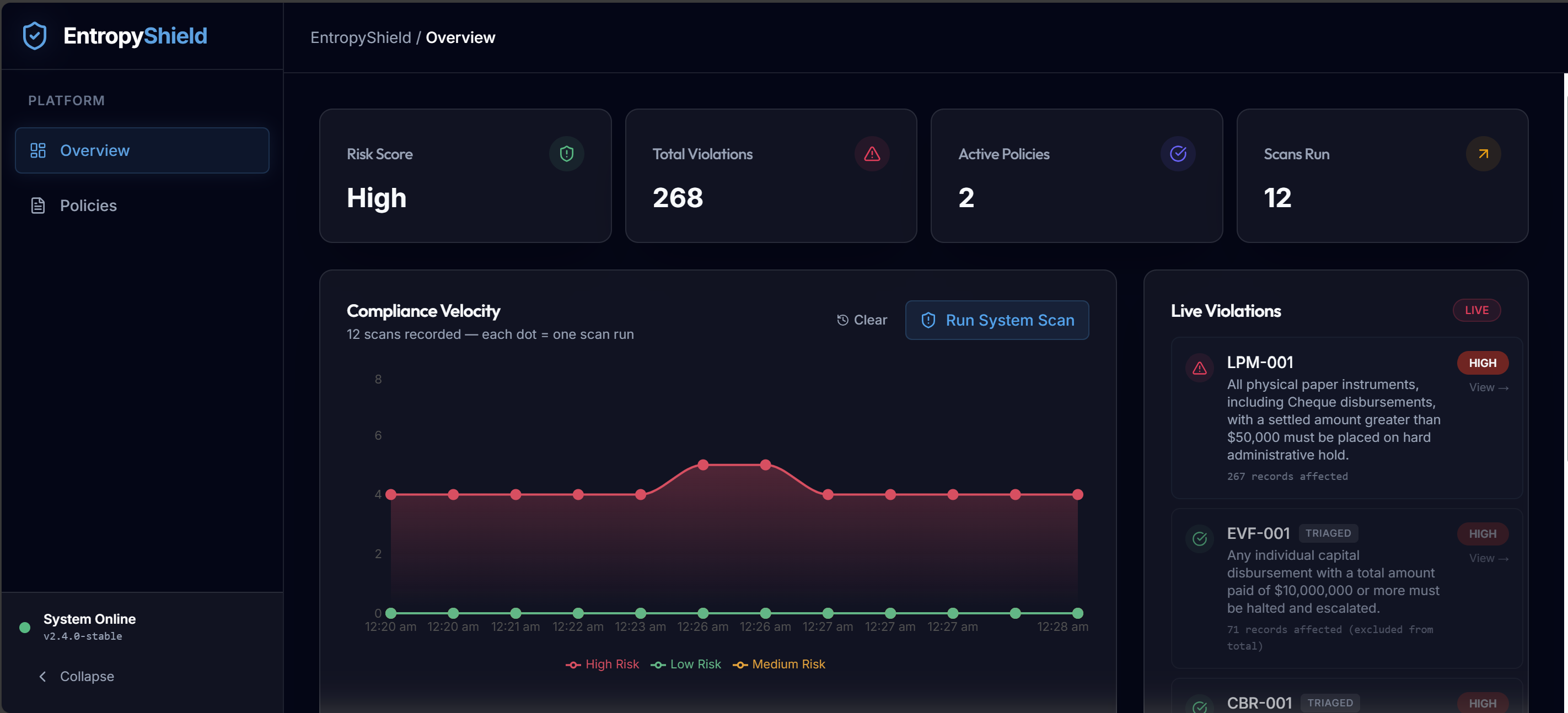

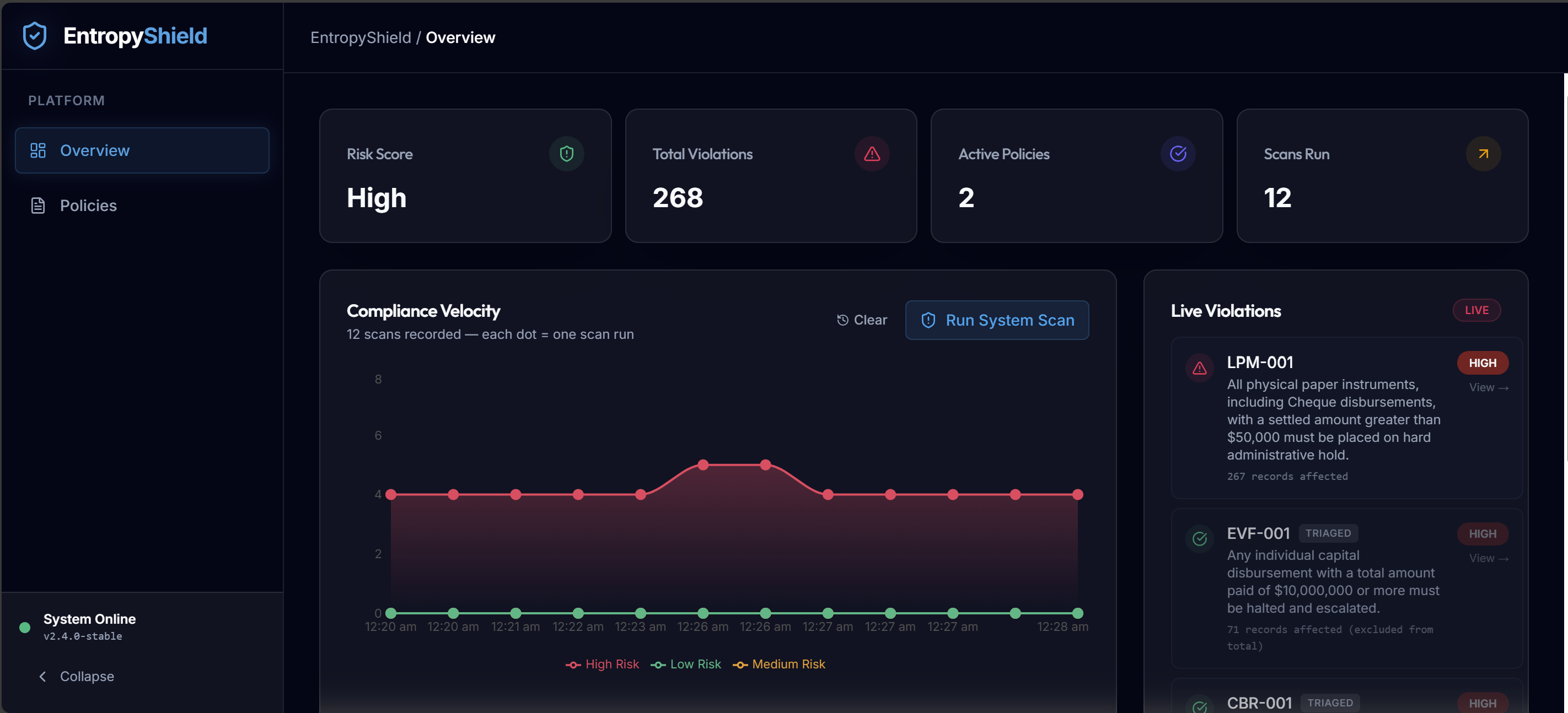

EntropyShield is an intelligent, automated compliance engine. It solves the costly "compliance gap" where corporate rules live in static, unstructured documents (like PDFs and contract memos), while the actual operational data they govern lives in fast-moving structured databases. EntropyShield bridges this gap by acting as an AI Data Engineer—translating free-text policies into executable software logic.

Core Goals:

- Automate Rule Extraction: Use Generative AI (Google Gemini) to read free-text policy documents and autonomously generate deterministic SQL queries that capture the exact compliance rules.

- Ensure Continuous Coverage: Run these generated queries against live company databases on a recurring schedule, checking every record against every active rule instead of relying on manual, periodic audits.

- Guarantee Document Trust: Verify that the uploaded policy PDFs are authentic.

- Enable Explainable Triage: Provide compliance officers with a live dashboard to review violations. Every flag includes the exact policy quote that triggered it, alongside a robust human-in-the-loop audit trail for approving or rejecting specific records.

Detailed Workflow Architecture

Phase 1: Policy Ingestion & AI Interpretation

The first step of the system is bridging the gap between human language and database logic.

- Document Upload: Users upload a free-text policy document (e.g., an Anti-Money Laundering protocol) via the frontend.

- Agentic Rule Extraction (Gemini 1.5 Pro): The backend sends the raw PDF to Google Vertex AI. We use a sophisticated agentic loop where the LLM is instructed to act as a Data Engineer.

- SQL Generation & Validation Loop:

  - The LLM reads the unstructured text and extracts distinct rules.

  - It is provided with the live schema of the company's internal operational database.

  - For each extracted rule, the LLM generates a SQL query designed to target violating rows.

  - Before returning its response, the LLM calls an internal tool (`validate_sql`) to test its generated query against the actual database schema. If the SQL syntax is wrong or uses non-existent tables, the backend returns the error to the LLM, forcing it to try again until the query executes successfully.

- Persistent Storage: Once validated, the pure SQL rules, each paired with the exact quote from the PDF for explainability, are stored permanently in a `policies` tracking table.
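The validation loop described above can be sketched as follows. This is a minimal illustration, not the project's actual code: it assumes a SQLite database, uses `EXPLAIN` so the engine compiles a query without running it, and replaces the Gemini call with a hypothetical `generate_sql` callable. The `validate_sql` name comes from the description above, but its signature and the `generate_validated_sql` helper are assumptions.

```python
import sqlite3
from typing import Callable, Optional


def validate_sql(conn: sqlite3.Connection, sql: str) -> Optional[str]:
    """Return None if the query compiles against the schema, else the error text."""
    if not sql.lstrip().lower().startswith("select"):
        return "only SELECT statements are accepted"
    try:
        # EXPLAIN makes SQLite parse and plan the statement without running it,
        # so unknown tables or columns surface as errors here.
        conn.execute(f"EXPLAIN {sql}")
    except (sqlite3.Error, sqlite3.Warning) as exc:
        return str(exc)
    return None


def generate_validated_sql(
    conn: sqlite3.Connection,
    generate_sql: Callable[[Optional[str]], str],  # hypothetical stand-in for the LLM call
    max_attempts: int = 3,
) -> str:
    """Request SQL, feeding each validation error back, until a query compiles."""
    error: Optional[str] = None
    for _ in range(max_attempts):
        sql = generate_sql(error)  # the previous error (or None) goes back to the model
        error = validate_sql(conn, sql)
        if error is None:
            return sql
    raise RuntimeError(f"no valid query after {max_attempts} attempts: {error}")
```

Capping the number of attempts is a design choice of this sketch; it keeps a rule that cannot be expressed against the schema from looping indefinitely.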

Phase 2: Autonomous Compliance Monitoring

Once the unstructured text has been securely translated into deterministic SQL, the system actively guards the data.

- The Background Sentinel: A backend service (`compliance_monitor.py`) periodically scans the live operational database where normal business transactions (like wire transfers or expense reports) are occurring.

- Executing the Rules: The engine pulls all active policies and runs the associated SQL queries generated by Gemini.

- Identifying Flags: Any row returned by these SQL queries is immediately flagged as a compliance violation (e.g., catching a high-value cross-bank wire transfer that violates a newly uploaded policy).
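A single sweep of the monitor might look like the sketch below. It assumes a SQLite database and a `policies` table with `id`, `quote`, `sql_query`, and `active` columns; only the table name comes from the description above, so the column names, the `Violation` type, and the `run_sweep` function are hypothetical.

```python
import sqlite3
from dataclasses import dataclass


@dataclass
class Violation:
    policy_id: int
    policy_quote: str  # exact text from the PDF, kept for explainability
    row: dict          # the offending record, column name -> value


def run_sweep(conn: sqlite3.Connection) -> list[Violation]:
    """Run every active policy's SQL and flag each row it returns."""
    conn.row_factory = sqlite3.Row  # lets rows be read by column name
    policies = conn.execute(
        "SELECT id, quote, sql_query FROM policies WHERE active = 1"
    ).fetchall()
    violations = []
    for policy in policies:
        # Any row returned by a policy's query counts as a violation.
        for row in conn.execute(policy["sql_query"]):
            violations.append(Violation(policy["id"], policy["quote"], dict(row)))
    return violations
```

A scheduler or a simple timed loop would call `run_sweep` at whatever interval the deployment needs; that part is omitted here.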

Phase 3: The Live Triage Dashboard (Human-in-the-Loop)

Finding violations is only half the battle; the system provides a robust UI for compliance officers to manage them.

- Real-Time Data Viewer: The frontend React dashboard instantly surfaces the flagged rows in a Live Event Stream.

- Explainable AI (XAI): When an officer clicks on a flag, they aren't just given a database row. The UI displays the Policy Basis: the exact quote from the original PDF that generated the rule. This eliminates ambiguity as to why the system flagged the record.

- Granular Record-Level Review: The system supports deep-dive triage. If a rule flags 50 records, the officer can expand the table, individually select 5 specific records that are actually false positives (or approved exceptions), and hit "Reject/Approve selected".

- Dynamic Data Filtering: The remaining 45 records stay in the active KPI count, ensuring the dashboard perfectly reflects un-triaged risk.

Phase 4: Audit Trails & Reporting

Every action taken by the Human-in-the-Loop is durably recorded for accountability.

- The `audit_logs` Schema: Whenever an officer approves or rejects a specific record-level violation, that action (along with their justification note and timestamp) is permanently inserted into the `audit_logs` table in the database.

- Rule Exclusion: During the next background sweep (Phase 2), the compliance monitor actively subtracts any specifically approved records found in the audit logs.

- Audit-Ready Extraction: Because decisions are safely written back to the relational database, generating a forensic report for an external auditor or regulator simply requires running a standard `SELECT *` across the audit history.
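The audit flow above could be sketched like this. It assumes SQLite and an `audit_logs` table with `policy_id`, `record_id`, `decision`, `note`, and `decided_at` columns; the table name is from the description, while the columns and all three helper functions are hypothetical.

```python
import sqlite3
from datetime import datetime, timezone

# Assumed layout; the real audit_logs schema may differ.
AUDIT_SCHEMA = """
CREATE TABLE IF NOT EXISTS audit_logs (
    policy_id  INTEGER NOT NULL,
    record_id  INTEGER NOT NULL,
    decision   TEXT    NOT NULL CHECK (decision IN ('approved', 'rejected')),
    note       TEXT,
    decided_at TEXT    NOT NULL
)
"""


def record_decision(conn, policy_id, record_id, decision, note):
    """Append one officer decision with a UTC timestamp; rows are only inserted."""
    conn.execute(
        "INSERT INTO audit_logs VALUES (?, ?, ?, ?, ?)",
        (policy_id, record_id, decision, note, datetime.now(timezone.utc).isoformat()),
    )
    conn.commit()


def exclude_approved(conn, policy_id, flagged_ids):
    """Drop record ids that an officer has already approved for this policy."""
    approved = {
        row[0]
        for row in conn.execute(
            "SELECT record_id FROM audit_logs "
            "WHERE policy_id = ? AND decision = 'approved'",
            (policy_id,),
        )
    }
    return [rid for rid in flagged_ids if rid not in approved]


def audit_report(conn):
    """Return the full decision history, oldest first, for an external auditor."""
    return conn.execute("SELECT * FROM audit_logs ORDER BY decided_at").fetchall()
```

In this sketch the next sweep would pass each policy's flagged record ids through `exclude_approved` before counting them, so approved exceptions stop reappearing on the dashboard.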

This build was uploaded as a hackathon project.