SAH_SyntaxX

Open-source project link: https://drive.google.com/file/d/1gSQ71yumtPfE1_jhy9YM6XWUKK_2pVF9/view?usp=drive_link

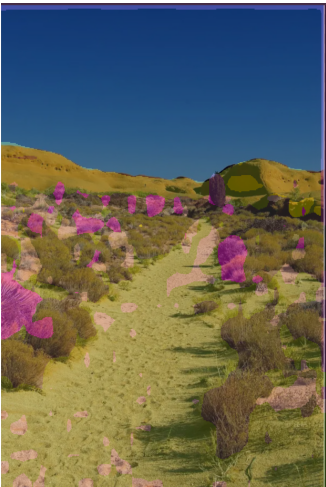

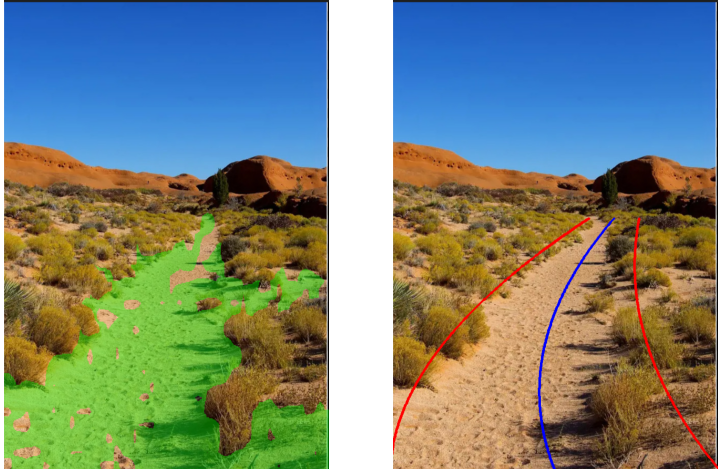

This project presents a deep learning–based system for off-road terrain segmentation and navigable path extraction, built on the DINOv2 Vision Transformer (ViT-B/14). The system performs pixel-wise semantic segmentation of off-road terrain images and then extracts a smooth navigable path from the predicted drivable region, providing lane-style guidance suitable for autonomous vehicle applications.
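As a rough illustration of how a ViT-B/14 backbone feeds a dense decoder, the sketch below computes the patch-token grid for an input image and reshapes a flat list of patch tokens back into that grid. It assumes image sides that are multiples of the 14-pixel patch size and that the class token has already been removed. The 518 x 518 resolution and the helper names `patch_grid` and `tokens_to_grid` are hypothetical and not taken from the project.

```python
PATCH_SIZE = 14  # ViT-B/14: each token covers a 14 x 14 pixel patch (768-dim embedding)


def patch_grid(height, width, patch=PATCH_SIZE):
    """Return (rows, cols) of the patch-token grid for an image of the given size."""
    if height % patch or width % patch:
        raise ValueError("image height and width must be multiples of the patch size")
    return height // patch, width // patch


def tokens_to_grid(tokens, rows, cols):
    """Reshape a flat, row-major list of patch tokens into a rows x cols grid.

    Assumes the class token (and any register tokens) were already removed,
    so only patch tokens remain.
    """
    if len(tokens) != rows * cols:
        raise ValueError("token count does not match the grid size")
    return [tokens[r * cols:(r + 1) * cols] for r in range(rows)]


# Hypothetical input resolution, not stated in the project description.
rows, cols = patch_grid(518, 518)
print(rows, cols, rows * cols)  # 37 37 1369
```

Because each token summarizes a 14 x 14 patch, a decoder working on such a grid has to upsample by a factor of 14 to return to pixel resolution. This is one reason a convolutional decoder with upsampling stages is a natural fit on top of the backbone.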

A pretrained DINOv2 backbone was used to extract high-level visual representations of complex terrain patterns, and a custom convolutional decoder was designed to turn these features into dense segmentation maps covering 10 terrain classes. To improve segmentation quality and handle class imbalance, training used a combined loss function made up of Cross-Entropy, Dice, and Focal Loss.
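A minimal sketch of such a combined objective follows. It is written in plain Python over per-pixel class scores so that the arithmetic is easy to follow. The equal weights (`w_ce`, `w_dice`, `w_focal` all 1.0), the focal exponent `gamma=2.0`, and the Dice smoothing constant are assumptions, not the project's actual settings. The Focal term also omits the optional per-class alpha weight. A real training loop would compute the same quantities on batched tensors.

```python
import math


def softmax(scores):
    """Numerically stable softmax over one pixel's class scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def combined_loss(logits, targets, num_classes,
                  w_ce=1.0, w_dice=1.0, w_focal=1.0,
                  gamma=2.0, smooth=1.0, eps=1e-7):
    """Weighted sum of Cross-Entropy, soft Dice, and Focal loss (weights are assumptions).

    logits:  list of per-pixel class-score lists (one entry per pixel)
    targets: list of ground-truth class ids, same length as logits
    """
    probs = [softmax(row) for row in logits]
    n = len(targets)
    # Probability assigned to the true class, clamped to avoid log(0).
    p_true = [max(probs[i][targets[i]], eps) for i in range(n)]

    ce = -sum(math.log(p) for p in p_true) / n
    # Focal loss down-weights easy pixels by (1 - p)^gamma (no alpha term here).
    focal = -sum((1 - p) ** gamma * math.log(p) for p in p_true) / n

    # Soft Dice per class, averaged over classes (macro Dice).
    dice_scores = []
    for c in range(num_classes):
        inter = sum(probs[i][c] for i in range(n) if targets[i] == c)
        pred_sum = sum(probs[i][c] for i in range(n))
        true_sum = sum(1 for t in targets if t == c)
        dice_scores.append((2 * inter + smooth) / (pred_sum + true_sum + smooth))
    dice = 1 - sum(dice_scores) / num_classes

    return w_ce * ce + w_dice * dice + w_focal * focal
```

Cross-Entropy treats every pixel equally. The Dice term scores each class's overlap separately, so rare classes count as much as common ones. The Focal term shrinks the contribution of pixels the model already classifies confidently. Together, they push training toward the harder and less frequent terrain classes.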

The trained model achieved a mean Intersection over Union (mIoU) of approximately 0.60 on the validation set. Post-processing steps, including connected component analysis and polynomial curve fitting, were then applied to isolate the largest drivable region and generate smooth left and right boundary curves along with a center navigation path.
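The evaluation metric and post-processing steps can be sketched in plain Python as follows. `mean_iou` averages per-class IoU over the classes present in either label map. `largest_component` keeps the biggest 4-connected region of a boolean drivable mask. Which of the 10 classes count as drivable is not specified here, so building that mask is left to the caller. `extract_path` fits a quadratic x = a*y^2 + b*y + c to the leftmost and rightmost drivable pixel of each image row and takes the centerline as the average of the two curves. The quadratic degree, the 4-connectivity, the per-row boundary sampling, and all function names are illustrative assumptions, not the project's confirmed choices.

```python
from collections import deque


def mean_iou(pred, target, num_classes):
    """Mean IoU over flat lists of class ids; classes absent from both are skipped."""
    ious = []
    for c in range(num_classes):
        inter = sum(1 for p, t in zip(pred, target) if p == c and t == c)
        union = sum(1 for p, t in zip(pred, target) if p == c or t == c)
        if union:
            ious.append(inter / union)
    return sum(ious) / len(ious) if ious else 0.0


def largest_component(mask):
    """Keep only the largest 4-connected region of True cells in a 2D boolean grid."""
    rows, cols = len(mask), len(mask[0])
    seen = [[False] * cols for _ in range(rows)]
    best = []
    for r in range(rows):
        for c in range(cols):
            if not mask[r][c] or seen[r][c]:
                continue
            component, queue = [], deque([(r, c)])
            seen[r][c] = True
            while queue:  # breadth-first flood fill
                y, x = queue.popleft()
                component.append((y, x))
                for ny, nx in ((y + 1, x), (y - 1, x), (y, x + 1), (y, x - 1)):
                    if 0 <= ny < rows and 0 <= nx < cols and mask[ny][nx] and not seen[ny][nx]:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            if len(component) > len(best):
                best = component
    out = [[False] * cols for _ in range(rows)]
    for y, x in best:
        out[y][x] = True
    return out


def _det3(m):
    """Determinant of a 3x3 matrix given as nested lists."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def fit_quadratic(ys, xs):
    """Least-squares fit of x = a*y^2 + b*y + c via Cramer's rule on the normal equations."""
    s = [sum(y ** k for y in ys) for k in range(5)]
    t = [sum(x * y ** k for x, y in zip(xs, ys)) for k in range(3)]
    a_mat = [[s[4], s[3], s[2]], [s[3], s[2], s[1]], [s[2], s[1], s[0]]]
    rhs = [t[2], t[1], t[0]]
    det = _det3(a_mat)
    if abs(det) < 1e-12:
        raise ValueError("need at least three distinct rows to fit a quadratic")
    coeffs = []
    for j in range(3):  # Cramer's rule: replace column j with the right-hand side
        m = [row[:] for row in a_mat]
        for i in range(3):
            m[i][j] = rhs[i]
        coeffs.append(_det3(m) / det)
    return coeffs  # [a, b, c]


def extract_path(drivable):
    """Return (left, right, center) quadratic coefficients for the largest drivable region."""
    region = largest_component(drivable)
    ys, left, right = [], [], []
    for y, row in enumerate(region):
        xs = [x for x, v in enumerate(row) if v]
        if xs:
            ys.append(y)
            left.append(min(xs))
            right.append(max(xs))
    left_c, right_c = fit_quadratic(ys, left), fit_quadratic(ys, right)
    center_c = [(lc + rc) / 2 for lc, rc in zip(left_c, right_c)]
    return left_c, right_c, center_c


# Toy example: a straight corridor plus one isolated pixel that gets discarded.
mask = [[2 <= x <= 4 for x in range(7)] for _ in range(5)]
mask[0][6] = True
print(extract_path(mask))
```

Averaging the coefficients works for the centerline because the midpoint of two polynomials in y is itself a polynomial whose coefficients are the averages. Keeping only the largest component first stops small misclassified patches from pulling the fitted boundaries sideways.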

This build was submitted as a hackathon project.